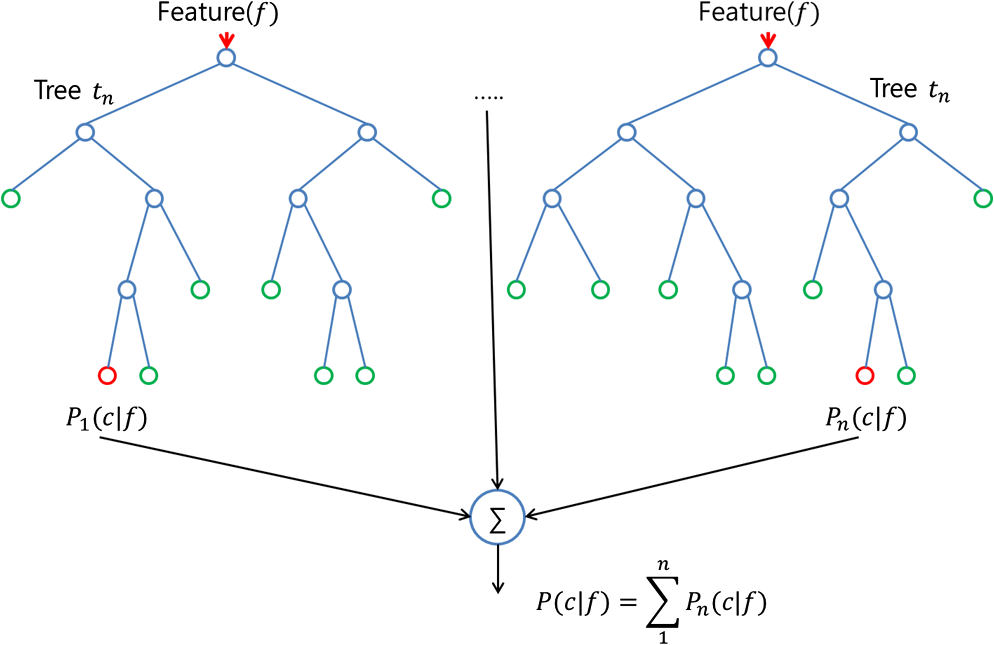

Chapter 7 revisits learning from a perspective that is different from Chapter 5. In Chapter 5 we introduced the concept of overfitting and the use of cross-validation as a safeguard mechanism to help us build models that don't overfit the data; that chapter focused on fair evaluation of the performance of a specific model. Chapter 7, taking a process-oriented view of overfitting, focuses on the performance of a learning algorithm (algorithms are computational procedures that learn models from data).

This chapter introduces two methods that aim to build a safeguard mechanism into the learning algorithms themselves: the Support Vector Machine (SVM) and Ensemble Learning (the random forest model is a typical example of ensemble learning). While any model can overfit a dataset, these methods aim to reduce the risk of overfitting through their own modeling principles. In short, Chapter 5 introduced evaluative methods that concern whether a model has learned from the data, whereas Chapter 7 introduces learning methods that concern how to learn better from the data. It is about quality improvement.

A learning algorithm has an objective function and sometimes a set of constraints. The objective function corresponds to a quality of the learned model that could help it succeed on the unseen testing data. Equations (16), (28), and (40) are examples of objective functions; they were developed based on the likelihood principle. Beyond the likelihood principle, researchers have studied what other qualities a model should have and what objective function we should optimize to enhance them. The constraints, on the other hand, guard the bottom line: the learned model needs to at least perform well on the training data, so that it is possible to perform well on future unseen data. (The testing data, while unseen, is assumed to be statistically the same as the training data. This is a basic assumption in machine learning.)

Figure 117 shows an example of a binary classification problem.

Figure 117: Which model (i.e., here, which line) should we use as our classification model to separate the two classes of data points?

The constraints here are obvious: the models should correctly classify the data points. All three models perform well, yet we hesitate to say that they are equally good. Common sense tells us that Model 3 is the least favorable: unlike the other two, it is close to a few data points. In other words, the line of Model 3 is too close to the data points and therefore lacks a safe margin. This makes Model 3 bear a risk of misclassification on future unseen data. The locations of the existing data points suggest where future unseen data may be located, but this is a suggestion, not a hard boundary. The concept of margin is shown in Figure 118.

Figure 118: The model that has a larger margin is better: the basic idea of SVM.

To reduce risk, we should make the margin as large as possible. The other two models have larger margins, and Model 2 is the best because it has the largest margin. In summary, all the models shown in Figure 117 meet the constraints (i.e., perform well on the training data points). However, this is just the bottom line for a model to be good; the models are ranked differently by an objective function that maximizes the margin of the model. This is the maximum margin principle introduced by SVM.

Consider a binary classification problem as shown in Figure 118. For now, we consider situations in which all data points can be correctly classified by a line, which is clearly the case in Figure 117. This is called the linearly separable case.

Both random forests and SVMs are non-parametric models (i.e., their complexity grows as the number of training samples increases). Training a non-parametric model can thus be more expensive, computationally, than training a generalized linear model, for example. The complexity of a random forest grows with the number of trees in the forest and the number of training samples; the more trees we have, the more expensive it is to build a random forest. For SVMs, we can end up with a lot of support vectors: in the worst-case scenario, we have as many support vectors as we have samples in the training set. Moreover, although multi-class SVMs exist, the typical implementation for multi-class classification is One-vs.-All; thus, we have to train an SVM for each class. In contrast, decision trees and random forests can handle multiple classes out of the box. To summarize, random forests are much simpler to train for a practitioner: it is easier to find a good, robust model.
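The One-vs.-All scheme for multi-class classification can be sketched in a few lines. With K classes, train K binary classifiers, each separating one class (+1) from all the others (-1). Then predict the class whose classifier returns the largest score. This is a minimal sketch under stated assumptions. To stay dependency-free, a plain perceptron stands in for the binary SVM. The three-class toy data and the function names (`train_perceptron`, `train_one_vs_all`, `predict`) are hypothetical and do not come from any library.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Line = Tuple[float, float, float]  # (w1, w2, b) for the score w1*x1 + w2*x2 + b


def train_perceptron(data: List[Tuple[Point, int]], epochs: int = 100) -> Line:
    """Stand-in binary learner (a perceptron, NOT an SVM); labels are +1 / -1."""
    w1 = w2 = b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for (x1, x2), y in data:
            # Update only when the point is misclassified or lies on the line.
            if y * (w1 * x1 + w2 * x2 + b) <= 0:
                w1 += y * x1
                w2 += y * x2
                b += y
                mistakes += 1
        if mistakes == 0:  # every training point is on its correct side
            break
    return w1, w2, b


def train_one_vs_all(data: List[Tuple[Point, str]]) -> Dict[str, Line]:
    """Train one binary model per class: that class (+1) vs. all the others (-1)."""
    classes = sorted({label for _, label in data})
    return {
        c: train_perceptron([(x, 1 if label == c else -1) for x, label in data])
        for c in classes
    }


def predict(models: Dict[str, Line], x: Point) -> str:
    """Return the class whose binary model gives the largest score for x."""
    x1, x2 = x

    def score(c: str) -> float:
        w1, w2, b = models[c]
        return w1 * x1 + w2 * x2 + b

    return max(models, key=score)


# Hypothetical three-class toy data (two points per class).
data = [
    ((0.0, 0.0), "a"), ((1.0, 1.0), "a"),
    ((5.0, 0.0), "b"), ((6.0, 1.0), "b"),
    ((0.0, 5.0), "c"), ((1.0, 6.0), "c"),
]

models = train_one_vs_all(data)
print(len(models), "binary models trained")  # → 3 binary models trained
print(predict(models, (0.5, 5.5)))  # → c
```

The number of binary models grows with the number of classes (here, three). This is the extra training cost that One-vs.-All imposes on SVMs. A random forest, by contrast, handles all three classes in a single model.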

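The constraints and the maximum margin objective can be checked numerically on a linearly separable toy problem. The sketch below uses hypothetical points and hypothetical candidate lines, not the data of Figure 117. For each line, it computes the margin as the smallest signed distance \(y_i(\mathbf{w}^\top\mathbf{x}_i + b)/\lVert\mathbf{w}\rVert\) over the training points. A negative value means the line violates the constraint of classifying every point correctly. Among the lines that satisfy the constraint, the one with the largest margin is preferred. The helper names `margin` and `best_candidate` are illustrative. The sketch only compares a fixed list of candidates, whereas an actual SVM solver searches over all possible lines.

```python
import math

# Hypothetical toy data (not Figure 117): 2-D points with labels +1 / -1.
points = [
    ((1.0, 1.0), -1), ((2.0, 1.0), -1), ((1.0, 2.0), -1),
    ((4.0, 4.0), +1), ((5.0, 4.0), +1), ((4.0, 5.0), +1),
]

# Hypothetical candidate lines w1*x1 + w2*x2 + b = 0, stored as (w1, w2, b).
candidates = {
    "model_1": (1.0, 0.0, -3.0),  # vertical line x1 = 3
    "model_2": (1.0, 1.0, -5.5),  # line x1 + x2 = 5.5, midway between the classes
    "model_3": (1.0, 1.0, -3.5),  # line x1 + x2 = 3.5, close to the -1 points
    "model_4": (0.0, 1.0, -1.5),  # horizontal line x2 = 1.5, misclassifies (1, 2)
}


def margin(line, data):
    """Smallest signed distance y_i * (w.x_i + b) / ||w|| over all points.

    It is positive only if every point lies on its correct side (the constraint);
    its value is the margin (the quantity the maximum margin principle maximizes)."""
    w1, w2, b = line
    norm = math.hypot(w1, w2)
    return min(y * (w1 * x1 + w2 * x2 + b) / norm for (x1, x2), y in data)


def best_candidate(cands, data):
    """Drop candidates that violate the constraint, then pick the largest margin."""
    feasible = {name: margin(line, data) for name, line in cands.items()}
    feasible = {name: m for name, m in feasible.items() if m > 0}
    return max(feasible, key=feasible.get)


for name, line in candidates.items():
    print(f"{name}: margin = {margin(line, points):.3f}")
# → model_1: 1.000, model_2: 1.768, model_3: 0.354, model_4: -0.500
print("chosen:", best_candidate(candidates, points))  # → chosen: model_2
```

For this data, `model_1`, `model_2`, and `model_3` all satisfy the constraint, just as the three models in Figure 117 do. `model_3` has a thin margin, and `model_4` misclassifies a point. The maximum margin objective therefore selects `model_2`.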